GPT-4 reminds us how much AI research owes to physics

Artificial intelligence and large language models like GPT-4 are impressive. But the technology used to create such models only exists due to the prior achievements of physics, particularly quantum physics.

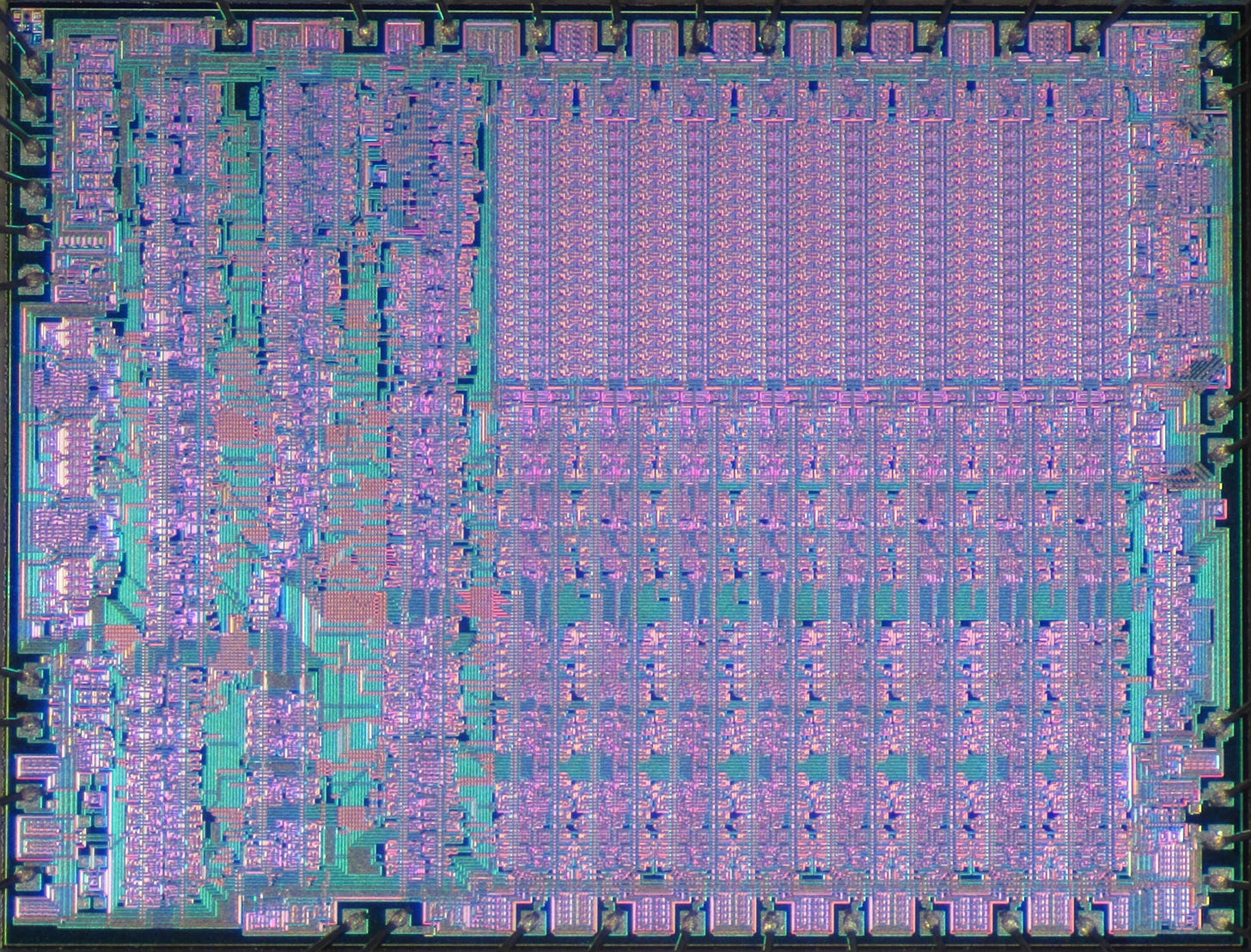

Transistors, the tiny semiconductor switches at the heart of every modern processor, would not have been developed without the quantum revolution initiated by the research of scientists such as Planck, Einstein, and Bohr. This line of research ultimately led to the high-performance computers needed to train GPT-4.

Training GPT-4 required tremendous computational power: scientists' median estimate is 7.6 × 10^24 FLOP (total floating point operations). Computation on this scale is only feasible because of the advances in computing technology made during the era of Moore’s law.
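To get a feel for that number, here is a rough back-of-the-envelope sketch. It takes the estimate of 7.6 × 10^24 as a total operation count and divides it by an assumed sustained throughput. The throughput value (10^15 operations per second for a single machine) is a hypothetical round number chosen for illustration, not a figure from the estimate itself.

```python
# Total training compute quoted in the text (floating point operations).
TOTAL_TRAINING_FLOP = 7.6e24

# ASSUMPTION: hypothetical sustained throughput of one machine,
# 1e15 floating point operations per second. Illustrative only.
ASSUMED_FLOP_PER_SECOND = 1e15

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def training_time_years(total_flop: float, flop_per_second: float) -> float:
    """Return the wall-clock time in years needed to perform total_flop operations."""
    return total_flop / flop_per_second / SECONDS_PER_YEAR


years = training_time_years(TOTAL_TRAINING_FLOP, ASSUMED_FLOP_PER_SECOND)
print(f"About {years:.0f} years on a single machine at the assumed throughput")
```

Under that assumption, a single machine would need roughly 240 years, which is why training at this scale depends on many processors working in parallel.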

Data storage is also important for large language models, and it relies on quantum principles like the electron tunnelling effect in flash memory.

The algorithms used in large language models also make use of mathematical tools originally developed for studying the physical world. For example, the error backpropagation algorithm is based on the chain rule of calculus, which stems from studying motion and change in the physical world.
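The link between backpropagation and the chain rule can be seen in a toy example. The sketch below is a minimal illustration, not how GPT-4 is actually trained: it assumes a single "neuron" with a tanh activation and a squared-error loss, and all function and variable names are made up for this example. The gradient is computed by multiplying local derivatives together, then checked against a numerical finite-difference estimate.

```python
import math


def forward(w: float, b: float, x: float, y: float):
    """Compute the pre-activation z, the activation a, and the loss."""
    z = w * x + b                 # linear step
    a = math.tanh(z)              # nonlinearity
    loss = 0.5 * (a - y) ** 2     # squared error against the target y
    return z, a, loss


def backward(w: float, b: float, x: float, y: float):
    """Gradients of the loss with respect to w and b via the chain rule."""
    _, a, _ = forward(w, b, x, y)
    dloss_da = a - y              # d(loss)/d(a)
    da_dz = 1.0 - a ** 2          # d(tanh z)/d(z)
    dz_dw = x                     # d(z)/d(w)
    dz_db = 1.0                   # d(z)/d(b)
    # Chain rule: multiply the local derivatives along the path to each parameter.
    return dloss_da * da_dz * dz_dw, dloss_da * da_dz * dz_db


def numerical_grad_w(w: float, b: float, x: float, y: float, eps: float = 1e-6) -> float:
    """Central finite-difference estimate of d(loss)/d(w), used as a sanity check."""
    loss_plus = forward(w + eps, b, x, y)[2]
    loss_minus = forward(w - eps, b, x, y)[2]
    return (loss_plus - loss_minus) / (2 * eps)


grad_w, grad_b = backward(0.5, 0.1, 2.0, 1.0)
print(grad_w, numerical_grad_w(0.5, 0.1, 2.0, 1.0))
```

The two printed values should agree closely. Real networks apply the same product of local derivatives layer by layer across billions of parameters.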

GPT-4 reminds us that AI scientists are standing on the shoulders of giants.